In a recent test of Apple's MLX machine learning framework, a benchmark shows how the new Apple Silicon Macs compete with Nvidia's RTX 4090.

Apple announced on December 6 the release of MLX, an open-source framework designed explicitly for Apple silicon. It's meant for AI developers to build upon, test, use, and enhance within their projects.

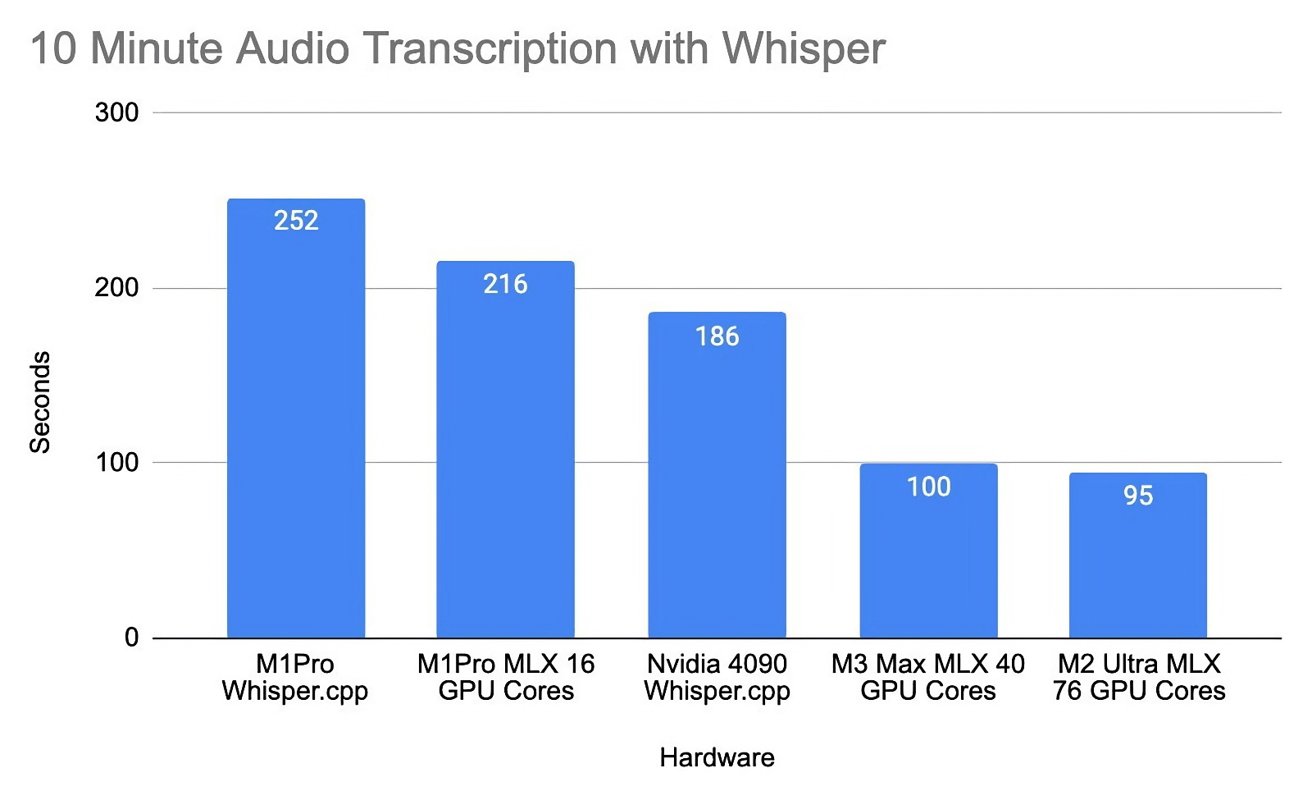

Developer Oliver Wehrens recently shared some benchmark results for the MLX framework on Apple's M1 Pro, M2, and M3 chips compared to Nvidia's RTX 4090 graphics card. The benchmark uses Whisper, OpenAI's speech recognition model.

Wehrens uses the Whisper model for transcribing speech and measures the time it takes to process a 10-minute audio file. Results show that the M1 Pro chip doesn't quite meet the Nvidia GPU's performance, taking 216 seconds to process the audio compared to the 4090's 186 seconds.

However, newer Apple chips perform much better. For instance, a different person ran the same audio file on an M2 Ultra with 76 GPU cores and an M3 Max with 40 GPU cores and found that these chips transcribed the audio in less time than the Nvidia GPU.

There is also a significant difference in power consumption between Apple's chips and Nvidia's offering. Specifically, when comparing the power usage of a PC with an Nvidia 4090 running versus its idle state, there's an increase of 242 watts.

In contrast, a MacBook with 16 M1 GPU cores shows a much smaller increase in power usage when active compared to its idle state, with a difference of just 38 watts.
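To put the power figures in perspective, here is a rough back-of-the-envelope sketch in Python that multiplies each reported active-versus-idle power increase by the transcription time from the original, non-optimized run. It assumes the extra power draw stays constant for the whole run and that the 16-GPU-core MacBook is the same M1 Pro machine that took 216 seconds; neither assumption is confirmed by the benchmark, so treat the output as an estimate rather than a measurement.

```python
# Rough estimate of the extra energy (above idle) used per transcription.
# Assumption: the reported power increase is constant for the full run.
# Assumption: the 38 W MacBook figure belongs to the M1 Pro that took 216 s.

def incremental_energy_joules(delta_watts: float, seconds: float) -> float:
    """Energy above idle, in joules (watts multiplied by seconds)."""
    return delta_watts * seconds

# (power increase over idle in watts, transcription time in seconds)
runs = {
    "RTX 4090 PC": (242.0, 186.0),
    "M1 Pro MacBook": (38.0, 216.0),
}

energy = {
    name: incremental_energy_joules(watts, seconds)
    for name, (watts, seconds) in runs.items()
}

for name, joules in energy.items():
    # 1 watt-hour equals 3600 joules
    print(f"{name}: {joules:.0f} J ({joules / 3600:.2f} Wh)")

ratio = energy["RTX 4090 PC"] / energy["M1 Pro MacBook"]
print(f"The PC used about {ratio:.1f}x the extra energy of the MacBook")
```

Under these assumptions, the slower M1 Pro run still comes out well ahead on energy per transcription, because its smaller power increase outweighs its longer run time.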

The results highlight Apple's gains in AI and machine learning capabilities and could be the beginning of better capabilities for Apple products. With the MLX framework now open-source, it paves the way for broader application and innovation for developers.

Nvidia's 4090 GPU starts at $1,599 just for the card, without a PC. That is the same as the starting price of the M3 MacBook Pro from 2023, but prices increase rapidly for M3 Pro and M3 Max.

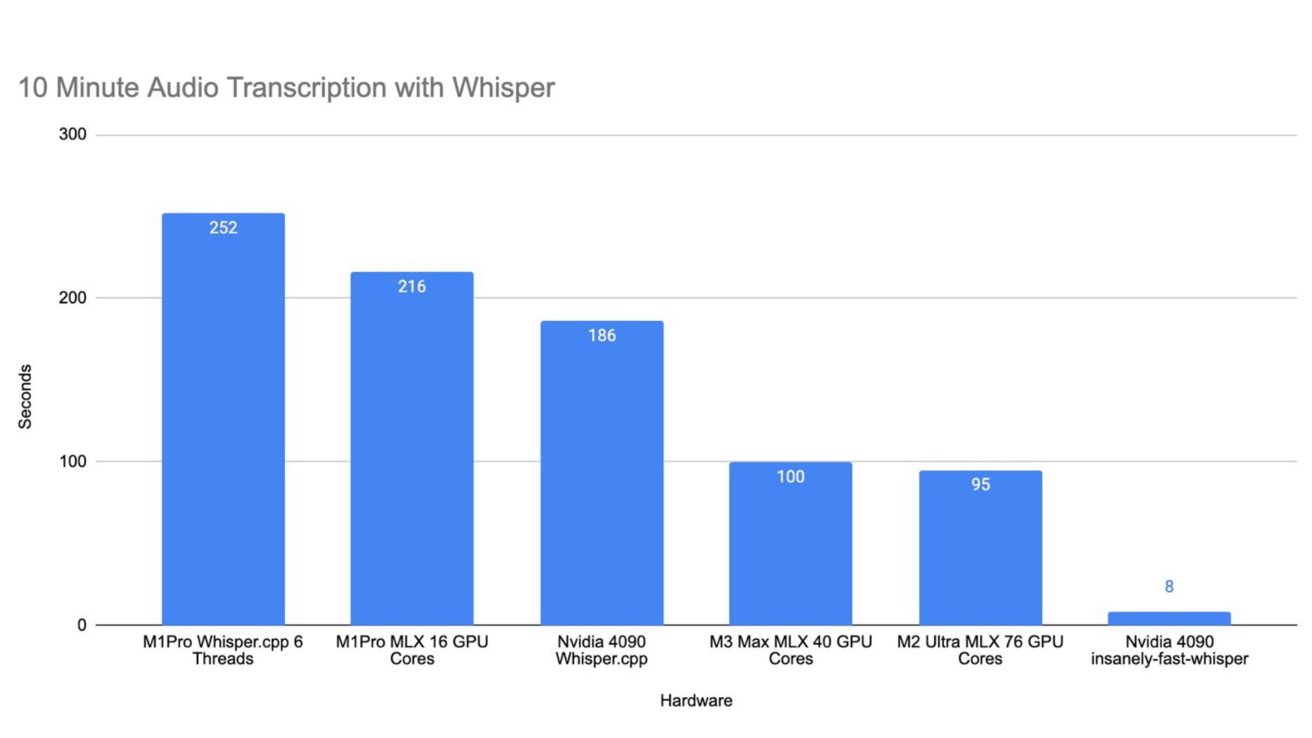

An update to Wehrens' blog post changed the story. The M3 chips still performed well, but Nvidia cut its times by more than half when using appropriately optimized benchmark tools.

We're leaving the original story intact since it reflects how the non-optimized tool performed. However, Apple's processors still have a way to go to match Nvidia's when it comes to AI transcription.

The most interesting factor to note is the difference in power consumption. That result didn't change — Apple's chips performed well at a fraction of Nvidia's power draw.

With the new results from the Nvidia-optimized tool, the RTX 4090 completes the transcription in 8 seconds. The M1 Pro took 263 seconds, the M2 Ultra took 95 seconds, and the M3 Max took 100 seconds.
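As a quick illustration of the size of that gap, the Python sketch below divides each Apple time quoted above by the 8-second result, which it reads as the RTX 4090's time with the optimized tool. The variable names are made up for this example, and the ratios describe only this one benchmark.

```python
# How many times longer each Apple chip took than the optimized RTX 4090 run.
# Assumption: the 8-second figure is the RTX 4090's time with the optimized tool.

NVIDIA_4090_SECONDS = 8.0

apple_seconds = {
    "M1 Pro": 263.0,
    "M2 Ultra": 95.0,
    "M3 Max": 100.0,
}

slowdown = {
    chip: seconds / NVIDIA_4090_SECONDS
    for chip, seconds in apple_seconds.items()
}

for chip, factor in slowdown.items():
    print(f"{chip}: about {factor:.1f}x the RTX 4090's time")
```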

Apple's results remain impressive given the power draw, but they don't match Nvidia's. Apple Silicon has room to improve, but it's getting there.

Updated December 13, 6:50 p.m. ET: Original post used a non-optimized benchmark showing inaccurate results.