A global registry tracking the flow of chips destined for AI supercomputers is one of the policy options highlighted by a major new report calling for regulation of "compute"—the hardware that underpins all AI—to help prevent artificial intelligence misuse and disasters.

Other technical proposals floated by the report include "compute caps"—built-in limits to the number of chips each AI chip can connect with—and distributing a "start switch" for AI training across multiple parties to allow for a digital veto of risky AI before it feeds on data.

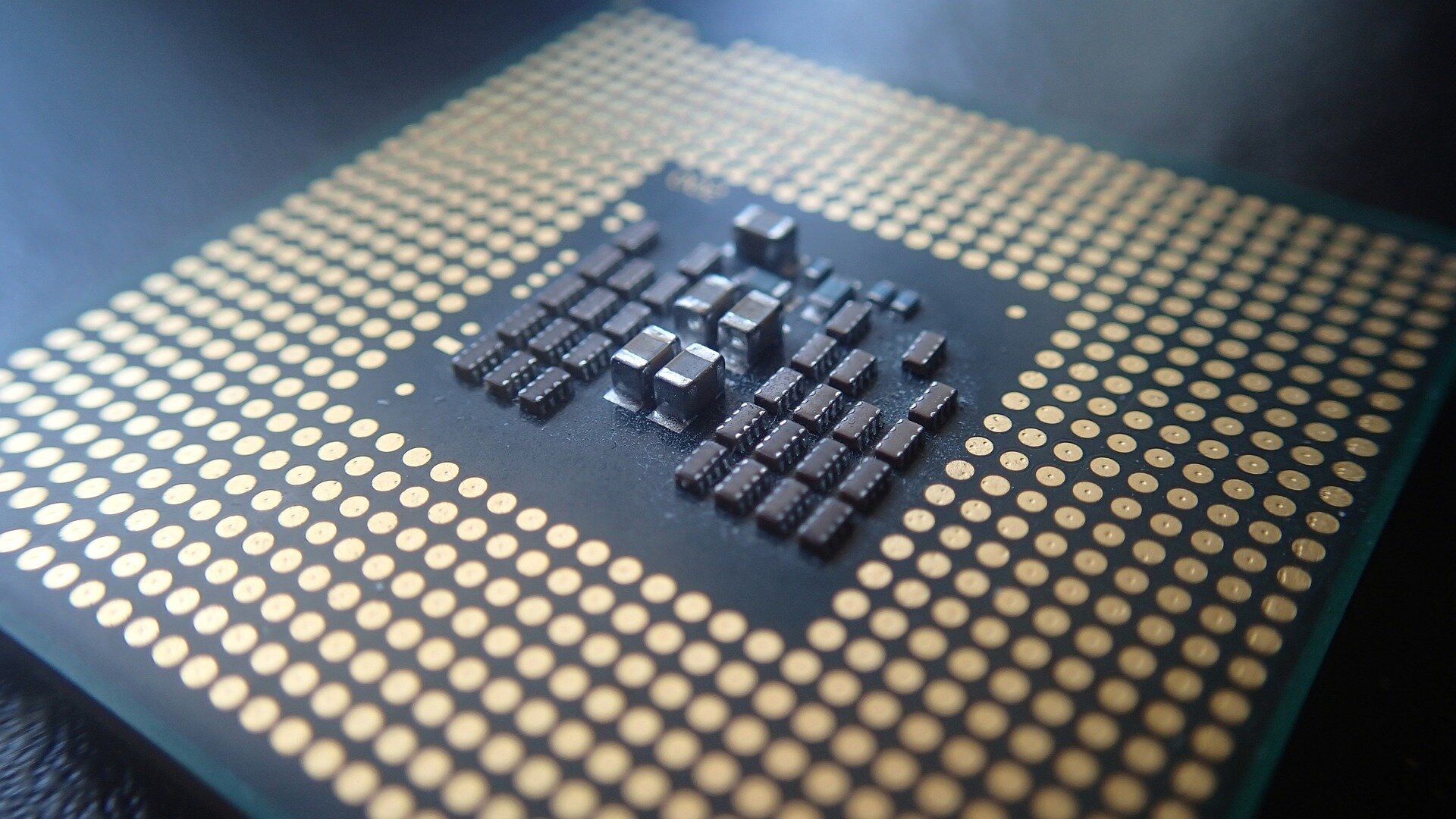

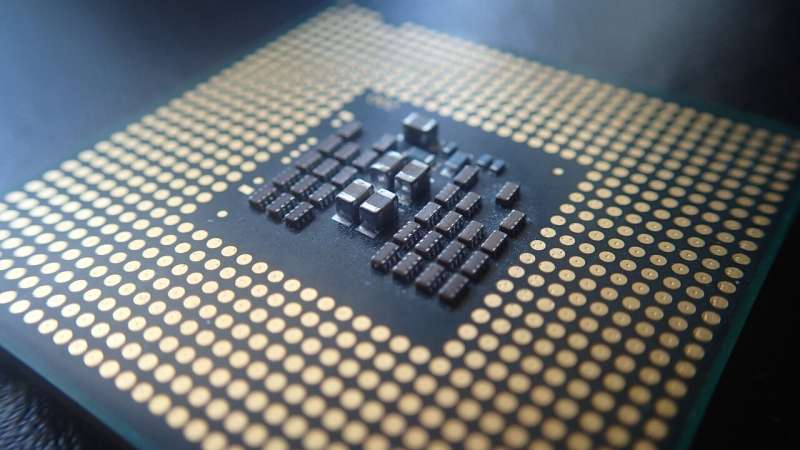

Researchers argue that AI chips and datacenters offer more effective targets for scrutiny and AI safety governance, as these assets have to be physically possessed, whereas the other elements of the "AI triad"—data and algorithms—can, in theory, be endlessly duplicated and disseminated.

The experts point out that powerful computing chips required to drive generative AI models are constructed via highly concentrated supply chains, dominated by just a handful of companies—making the hardware itself a strong intervention point for risk-reducing AI policies.

The report is authored by nineteen experts and co-led by three University of Cambridge institutes—the Leverhulme Center for the Future of Intelligence (LCFI), the Center for the Study of Existential Risk (CSER) and the Bennett Institute for Public Policy—along with OpenAI and the Center for the Governance of AI.

"Artificial intelligence has made startling progress in the last decade, much of which has been enabled by the sharp increase in computing power applied to training algorithms," said Haydn Belfield, a co-lead author of the report from Cambridge's LCFI.

"Governments are rightly concerned about the potential consequences of AI, and looking at how to regulate the technology, but data and algorithms are intangible and difficult to control.

"AI supercomputers consist of tens of thousands of networked AI chips hosted in giant data centers often the size of several football fields, consuming dozens of megawatts of power," said Belfield.

"Computing hardware is visible, quantifiable, and its physical nature means restrictions can be imposed in a way that might soon be nearly impossible with more virtual elements of AI."

The computing power behind AI has grown exponentially since the "deep learning era" kicked off in earnest, with the amount of "compute" used to train the largest AI models doubling around every six months since 2010. The biggest AI models now use 350 million times more compute than thirteen years ago.

Government efforts across the world over the past year—including the US Executive Order on AI, EU AI Act, China's Generative AI Regulation, and the UK's AI Safety Institute—have begun to focus on compute when considering AI governance.

Outside of China, the cloud compute market is dominated by three companies, termed "hyperscalers": Amazon, Microsoft, and Google.

"Monitoring the hardware would greatly help competition authorities in keeping in check the market power of the biggest tech companies, and so opening the space for more innovation and new entrants," said co-author Prof Diane Coyle from Cambridge's Bennett Institute.

More information: Computing Power and the Governance of Artificial Intelligence. www.cser.ac.uk/media/uploads/f … Governance-of-AI.pdf

Citation: Aim policies at hardware to ensure AI safety, say experts (2024, February 14) retrieved 14 February 2024 from https://techxplore.com/news/2024-02-aim-policies-hardware-ai-safety.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.